GPFS Installation and Deployment Guide

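1. GPFS Planning

Install two Red Hat 5 Linux virtual machines, each with an 8 GB system disk, and assign two additional 2 GB disks to each node. The detailed plan is shown in the table: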

2. GPFS Implementation

2.1 Software Requirements

Supporting software:

VMware 7.0

Red Hat 5

libstdc++

compat-libstdc++-296

compat-libstdc++-33

libXp

imake

gcc-c++

kernel

kernel-headers

kernel-devel

kernel-smp

kernel-smp-devel

xorg-x11-xauth

GPFS software requirements:

gpfs.base

gpfs.msg.en_US

gpfs.docs

gpfs.gpl

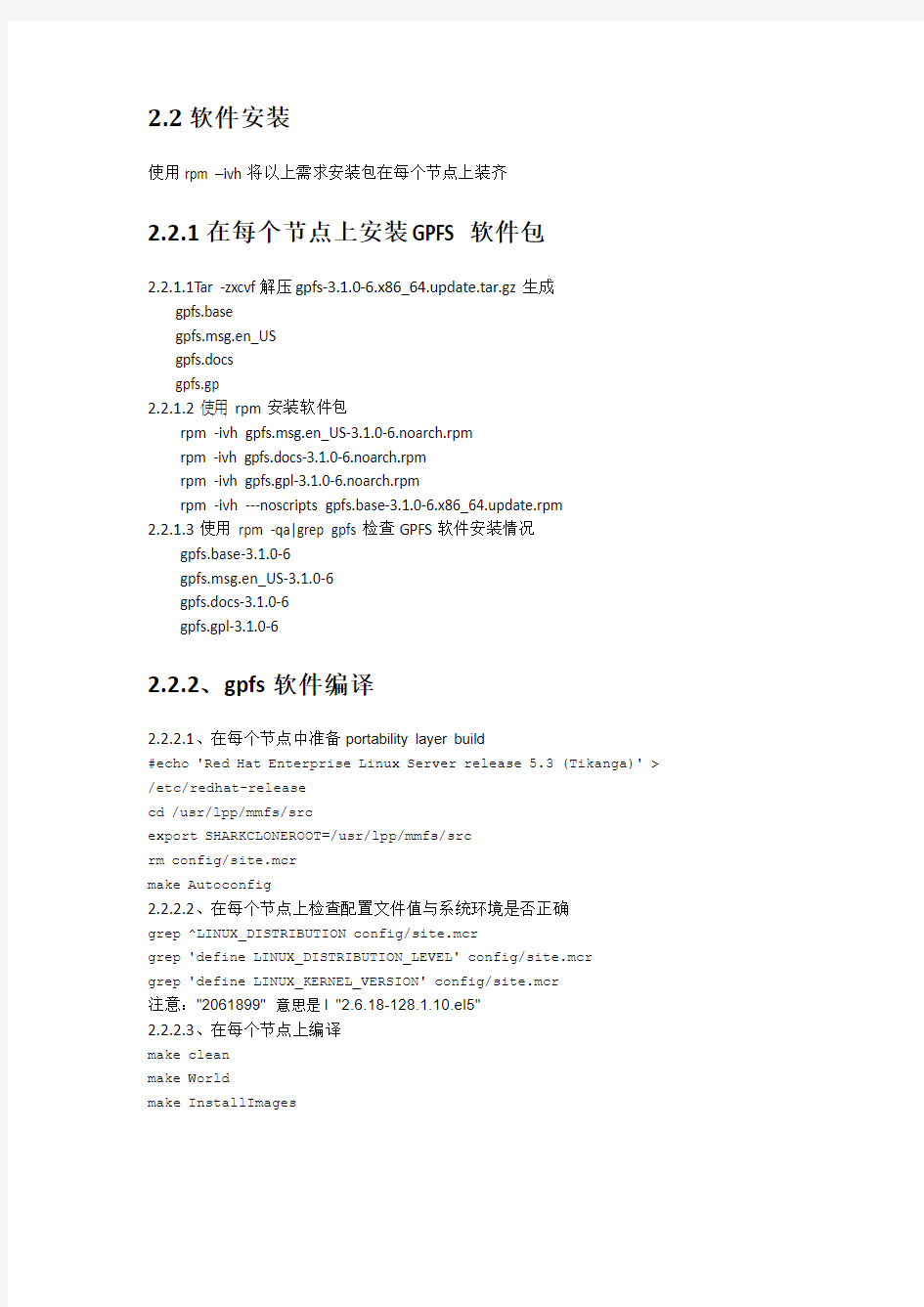

2.2 Software Installation

Use rpm -ivh to install all of the prerequisite packages listed above on every node.
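Before installing, it can help to see which prerequisites are still missing on a node. The following is a minimal sketch, not part of the original procedure: `missing_packages` is a hypothetical helper name, and it relies only on `rpm -q` returning a non-zero status for a package that is not installed.

```shell
# Print the name of every package that "rpm -q" reports as not installed.
# missing_packages is a hypothetical helper, not a GPFS or RPM tool.
missing_packages() {
    for pkg in "$@"; do
        rpm -q "$pkg" >/dev/null 2>&1 || echo "$pkg"
    done
}

# Example call on a node, using names from the list in section 2.1:
# missing_packages libstdc++ compat-libstdc++-296 compat-libstdc++-33 \
#     libXp imake gcc-c++ kernel-headers kernel-devel xorg-x11-xauth
```

An empty result means every listed package is already present; otherwise install the printed packages with rpm -ivh.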

2.2.1 Install the GPFS packages on every node

2.2.1.1 Extract gpfs-3.1.0-6.x86_64.update.tar.gz with tar -zxvf, which produces:

gpfs.base

gpfs.msg.en_US

gpfs.docs

gpfs.gpl

2.2.1.2 Install the packages with rpm

rpm -ivh gpfs.msg.en_US-3.1.0-6.noarch.rpm

rpm -ivh gpfs.docs-3.1.0-6.noarch.rpm

rpm -ivh gpfs.gpl-3.1.0-6.noarch.rpm

rpm -ivh --noscripts gpfs.base-3.1.0-6.x86_64.update.rpm

2.2.1.3 Check the GPFS installation with rpm -qa | grep gpfs

gpfs.base-3.1.0-6

gpfs.msg.en_US-3.1.0-6

gpfs.docs-3.1.0-6

gpfs.gpl-3.1.0-6

2.2.2 Compile GPFS

2.2.2.1 Prepare the portability layer build on every node

#echo 'Red Hat Enterprise Linux Server release 5.3 (Tikanga)' > /etc/redhat-release

cd /usr/lpp/mmfs/src

export SHARKCLONEROOT=/usr/lpp/mmfs/src

rm config/site.mcr

make Autoconfig

2.2.2.2 On every node, verify that the values in the configuration file match the system environment

grep ^LINUX_DISTRIBUTION config/site.mcr

grep 'define LINUX_DISTRIBUTION_LEVEL' config/site.mcr

grep 'define LINUX_KERNEL_VERSION' config/site.mcr

Note: "2061899" corresponds to kernel "2.6.18-128.1.10.el5".
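The mapping in the note can be reproduced with a small helper, which makes it easier to compare `uname -r` with the value in config/site.mcr. This is only a sketch under an assumed encoding, inferred from the single example above: one digit for the major version, two digits each for minor, patch, and build number, with the build number capped at 99. `encode_kernel_version` is a hypothetical name, not a GPFS tool.

```shell
# Encode a kernel release string the way LINUX_KERNEL_VERSION appears to be
# encoded (ASSUMPTION: major, then 2-digit minor, patch, and build number,
# with the build number capped at 99). Expects a numeric build number.
encode_kernel_version() {
    release=$1
    base=${release%%-*}            # e.g. 2.6.18
    case $release in
        *-*) rest=${release#*-}    # e.g. 128.1.10.el5
             build=${rest%%.*} ;;  # e.g. 128
        *)   build=0 ;;
    esac
    major=${base%%.*}
    tail=${base#*.}
    minor=${tail%%.*}
    patch=${tail#*.}
    [ "$build" -gt 99 ] && build=99
    printf '%d%02d%02d%02d\n' "$major" "$minor" "$patch" "$build"
}

# Example: encode_kernel_version "$(uname -r)"
```

For the release in the note, `encode_kernel_version 2.6.18-128.1.10.el5` prints 2061899.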

2.2.2.3 Compile on every node

make clean

make World

make InstallImages
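The build commands from 2.2.2.1 and 2.2.2.3 can be chained so that the build stops at the first failing target. This is a sketch only: `build_portability_layer` is a hypothetical wrapper name, it uses `rm -f` so that a missing site.mcr is not an error, and it skips the manual grep checks from 2.2.2.2, which should still be run after `make Autoconfig` on a real node.

```shell
# Run the portability layer build in a subshell so the caller's working
# directory and environment are left untouched.
# build_portability_layer is a hypothetical wrapper, not a GPFS command.
build_portability_layer() (
    src_dir=${1:-/usr/lpp/mmfs/src}
    cd "$src_dir" || exit 1
    export SHARKCLONEROOT="$src_dir"
    rm -f config/site.mcr          # force Autoconfig to regenerate it
    make Autoconfig &&
    make clean &&
    make World &&
    make InstallImages
)

# Example: build_portability_layer || echo "portability layer build failed"
```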

2.2.3 On every node, edit .bashrc to add the GPFS commands to the PATH

On each node, edit the PATH:

vi ~/.bashrc

Add:

PATH=$PATH:/usr/lpp/mmfs/bin

Apply the change immediately:

source ~/.bashrc
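When the same change has to be repeated on several nodes, a non-interactive variant avoids opening an editor. The sketch below appends the PATH line only if it is not already present, so running it twice does not duplicate the entry. `add_gpfs_path` is a hypothetical helper name.

```shell
# Append the GPFS bin directory to PATH in a shell startup file, once only.
# add_gpfs_path is a hypothetical helper; the default file is ~/.bashrc.
add_gpfs_path() {
    rc_file=${1:-$HOME/.bashrc}
    line='PATH=$PATH:/usr/lpp/mmfs/bin'
    # -x matches whole lines, -F treats the pattern as a fixed string
    grep -qxF "$line" "$rc_file" 2>/dev/null ||
        printf '%s\n' "$line" >> "$rc_file"
}

# Example: add_gpfs_path && . ~/.bashrc
```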

2.2.4 Create the node descriptor file

vi /tmp/gpfs_node

10.3.164.24 node1:quorum-manager

10.3.164.25 node2:quorum-manager

2.2.5 Create the disk descriptor file

/dev/hdb:node1:::1:GPFSNSD1
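The descriptor is a colon-separated record, and the empty positions matter. The sketch below splits it into named fields, assuming the GPFS 3.1 layout DiskName:PrimaryServer:BackupServer:DiskUsage:FailureGroup:DesiredName:StoragePool; that layout is an assumption that should be checked against the mmcrnsd documentation for the installed release. `describe_disk` is a hypothetical helper name. The mmcrnsd and mmcrfs commands in section 3 read the descriptor from /tmp/gpfs_disk.

```shell
# Split one disk descriptor line into its fields and print them by name.
# ASSUMED field order (GPFS 3.1):
# DiskName:PrimaryServer:BackupServer:DiskUsage:FailureGroup:DesiredName:StoragePool
describe_disk() {
    IFS=: read -r disk primary backup usage fgroup name pool <<EOF
$1
EOF
    # Empty fields are shown as "-"
    printf 'disk=%s primary=%s backup=%s usage=%s failure_group=%s nsd_name=%s pool=%s\n' \
        "$disk" "${primary:--}" "${backup:--}" "${usage:--}" \
        "${fgroup:--}" "${name:--}" "${pool:--}"
}

# Example: describe_disk '/dev/hdb:node1:::1:GPFSNSD1'
```

For the descriptor above this prints `disk=/dev/hdb primary=node1 backup=- usage=- failure_group=1 nsd_name=GPFSNSD1 pool=-`, which shows that no backup server, disk usage, or storage pool was specified.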

2.2.6 Set up host trust (passwordless SSH)

2.2.6.1 Run the following commands on both node1 and node2

#mkdir ~/.ssh

#chmod 700 ~/.ssh

#ssh-keygen -t rsa

#ssh-keygen -t dsa

2.2.6.2 Run the following commands on node1

#cat ~/.ssh/id_rsa.pub >> ~/.ssh/authorized_keys

#cat ~/.ssh/id_dsa.pub >> ~/.ssh/authorized_keys

#ssh node2 cat ~/.ssh/id_rsa.pub >> ~/.ssh/authorized_keys

#ssh node2 cat ~/.ssh/id_dsa.pub >> ~/.ssh/authorized_keys

#scp ~/.ssh/authorized_keys node2:~/.ssh/authorized_keys

2.2.6.3 Test SSH equivalence between the two nodes

#ssh node1 date

#ssh node2 date
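A plain `ssh node date` falls back to a password prompt when key authentication is not working, which can hide a broken setup. The sketch below repeats the same test with the OpenSSH option BatchMode=yes, so a node that would prompt is reported as failed instead. `check_ssh_equivalence` is a hypothetical helper name, and the 5-second timeout is an arbitrary choice.

```shell
# Try a non-interactive "ssh <node> date" for every node given.
# Returns 0 only if all nodes answer without a password prompt.
# check_ssh_equivalence is a hypothetical helper, not a GPFS command.
check_ssh_equivalence() {
    failed=0
    for node in "$@"; do
        if ssh -o BatchMode=yes -o ConnectTimeout=5 "$node" date >/dev/null 2>&1; then
            echo "$node: ok"
        else
            echo "$node: FAILED"
            failed=1
        fi
    done
    return "$failed"
}

# Example (run on each node): check_ssh_equivalence node1 node2
```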

2.2.7 Confirm the attached disks

#fdisk -l

Disk /dev/sda: 16.1 GB, 16106127360 bytes

255 heads, 63 sectors/track, 1958 cylinders

Units = cylinders of 16065 * 512 = 8225280 bytes

Device     Boot  Start  End   Blocks     Id  System
/dev/sda1  *     1      13    104391     83  Linux
/dev/sda2        14     1958  15623212+  8e  Linux LVM

Disk /dev/hdb: 2 GB, 2736369664 bytes

64 heads, 32 sectors/track, 10239 cylinders

Units = cylinders of 2048 * 512 = 1048576 bytes

3. GPFS Installation

3.1 Create the Cluster

3.1.1 Create the cluster on node1

mmcrcluster -n /tmp/gpfs_node -p node1 -s node2 -r /usr/bin/ssh -R /usr/bin/scp

Command output:

mmcrcluster: Processing node 1

mmcrcluster: Processing node2

mmcrcluster: Command successfully completed

mmcrcluster: Propagating the cluster configuration data to all affected nodes. This is an asynchronous process.

3.1.2 Display the cluster information

mmlscluster

GPFS cluster information

========================

GPFS cluster name: node1

GPFS cluster id: 13882348004399855353

GPFS UID domain: node1

Remote shell command: /usr/bin/ssh

Remote file copy command: /usr/bin/scp

GPFS cluster configuration servers:

-----------------------------------

Primary server: node1

Secondary server:

Node  Daemon node name  IP address   Admin node name  Designation
1     node1             10.3.164.24  node1            quorum-manager
2     node2             10.3.164.25  node2            quorum-manager

3.2 Create the NSD

3.2.1 Create the NSD with mmcrnsd

mmcrnsd -F /tmp/gpfs_disk -v yes

Command output:

mmcrnsd: Processing disk hdb

mmcrnsd: Propagating the cluster configuration data to all

affected nodes. This is an asynchronous process.

3.2.2 Display the NSD

mmlsnsd -m

Disk name  NSD volume ID     Device    Node name  Remarks
-------------------------------------------------------------------------------
gpfs1nsd   C0A801F54A9B3732  /dev/hdb  node1      primary node
gpfs1nsd   C0A801F54A9B3732  /dev/hdb  node2

3.3 Start GPFS

mmstartup -a

Mon Aug 31 10:37:48 CST 2009: mmstartup: Starting GPFS ...

3.4 Check the GPFS State

mmgetstate -a

Node number  Node name  GPFS state
------------------------------------------
1            node1      active
2            node2      active
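Because mmstartup returns before the daemons are fully up, it can be useful to check the state programmatically before creating the file system. The sketch below reads `mmgetstate -a` output on standard input and succeeds only if every node row reports "active". It assumes the three-column layout shown above (node number, node name, state); `all_nodes_active` is a hypothetical helper name.

```shell
# Read "mmgetstate -a" output on stdin; succeed only if at least one node
# row exists and every node row has "active" in the third column.
# ASSUMPTION: rows look like "<number> <node name> <state>" as shown above.
all_nodes_active() {
    awk '
        $1 ~ /^[0-9]+$/ { rows++; if ($3 != "active") bad++ }
        END { exit ((rows == 0 || bad > 0) ? 1 : 0) }
    '
}

# Example: mmgetstate -a | all_nodes_active && echo "GPFS is active on all nodes"
```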

3.5 Create the File System

#mkdir /gpfs    (create the mount point)

#./bin/mmcrfs /gpfs gpfsdev -F /tmp/gpfs_disk -A yes -B 1024K -v yes

Command output:

The following disks of gpfsdev will be formatted on node node1:

gpfs1nsd: size 2241856 KB

Formatting file system ...

Disks up to size 2 GB can be added to storage pool 'system'.

Creating Inode File

Creating Allocation Maps

Clearing Inode Allocation Map

Clearing Block Allocation Map

Completed creation of file system /dev/gpfsdev.

mmcrfs: Propagating the cluster configuration data to all

affected nodes. This is an asynchronous process.

3.6 Check the File System

#cat /etc/fstab

………………………

/dev/gpfsdev /gpfs gpfs rw,mtime,atime,dev=gpfsdev,autostart 0 0

#df -g

/dev/hdb /gpfs
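An entry in /etc/fstab only shows that the file system is configured, not that it is currently mounted. As a sketch, the mount table can be checked directly. `is_gpfs_mounted` is a hypothetical helper name, and it assumes the mount table lists GPFS file systems with the type string "gpfs", as the fstab entry above does.

```shell
# Succeed if the given mount point appears in the mount table with type gpfs.
# The second argument (mount table path) defaults to /proc/mounts.
# is_gpfs_mounted is a hypothetical helper, not a GPFS command.
is_gpfs_mounted() {
    mount_point=$1
    mounts_file=${2:-/proc/mounts}
    awk -v mp="$mount_point" '
        $2 == mp && $3 == "gpfs" { found = 1 }
        END { exit !found }
    ' "$mounts_file"
}

# Example: is_gpfs_mounted /gpfs && echo "/gpfs is mounted"
```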