A mathematical theory of communication

Reprinted with corrections from The Bell System Technical Journal, Vol. 27, pp. 379–423, 623–656, July, October, 1948.

A Mathematical Theory of Communication

By C. E. SHANNON

INTRODUCTION

THE recent development of various methods of modulation such as PCM and PPM which exchange bandwidth for signal-to-noise ratio has intensified the interest in a general theory of communication. A basis for such a theory is contained in the important papers of Nyquist¹ and Hartley² on this subject. In the present paper we will extend the theory to include a number of new factors, in particular the effect of noise in the channel, and the savings possible due to the statistical structure of the original message and due to the nature of the final destination of the information.

The fundamental problem of communication is that of reproducing at one point either exactly or approximately a message selected at another point. Frequently the messages have meaning; that is they refer to or are correlated according to some system with certain physical or conceptual entities. These semantic aspects of communication are irrelevant to the engineering problem. The significant aspect is that the actual message is one selected from a set of possible messages. The system must be designed to operate for each possible selection, not just the one which will actually be chosen since this is unknown at the time of design.

If the number of messages in the set is finite then this number or any monotonic function of this number can be regarded as a measure of the information produced when one message is chosen from the set, all choices being equally likely. As was pointed out by Hartley the most natural choice is the logarithmic function. Although this definition must be generalized considerably when we consider the influence of the statistics of the message and when we have a continuous range of messages, we will in all cases use an essentially logarithmic measure.

The logarithmic measure is more convenient for various reasons:

1. It is practically more useful. Parameters of engineering importance such as time, bandwidth, number of relays, etc., tend to vary linearly with the logarithm of the number of possibilities. For example, adding one relay to a group doubles the number of possible states of the relays. It adds 1 to the base 2 logarithm of this number. Doubling the time roughly squares the number of possible messages, or doubles the logarithm, etc.

2. It is nearer to our intuitive feeling as to the proper measure. This is closely related to (1) since we intuitively measure entities by linear comparison with common standards. One feels, for example, that two punched cards should have twice the capacity of one for information storage, and two identical channels twice the capacity of one for transmitting information.

3. It is mathematically more suitable. Many of the limiting operations are simple in terms of the logarithm but would require clumsy restatement in terms of the number of possibilities.

The choice of a logarithmic base corresponds to the choice of a unit for measuring information. If the base 2 is used the resulting units may be called binary digits, or more briefly bits, a word suggested by J. W. Tukey. A device with two stable positions, such as a relay or a flip-flop circuit, can store one bit of information. $N$ such devices can store $N$ bits, since the total number of possible states is $2^N$ and $\log_2 2^N = N$.

If the base 10 is used the units may be called decimal digits. Since

$$\log_2 M = \frac{\log_{10} M}{\log_{10} 2} = 3.32 \log_{10} M,$$

¹ Nyquist, H., “Certain Factors Affecting Telegraph Speed,” Bell System Technical Journal, April 1924, p. 324; “Certain Topics in Telegraph Transmission Theory,” A.I.E.E. Trans., v. 47, April 1928, p. 617.

² Hartley, R. V. L., “Transmission of Information,” Bell System Technical Journal, July 1928, p. 535.

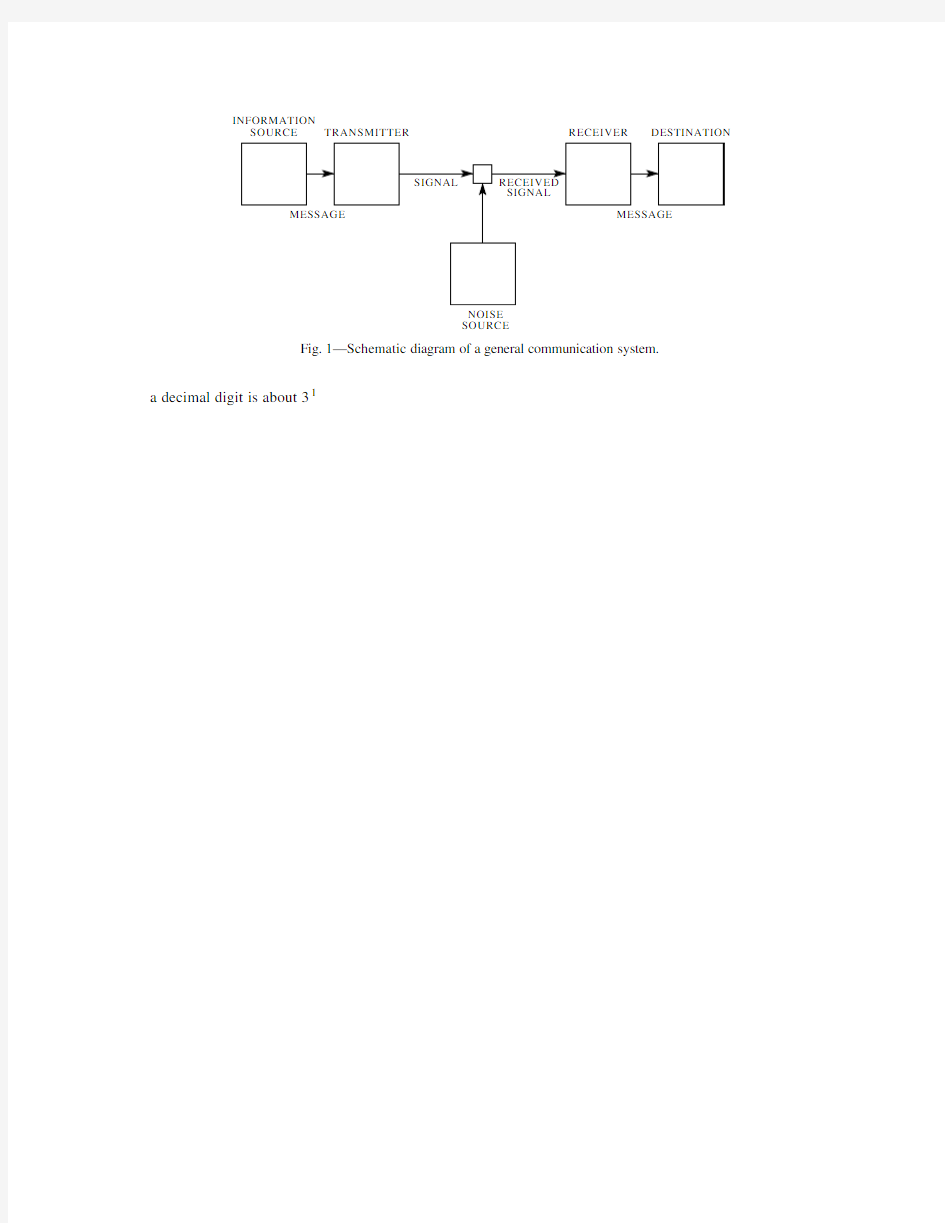

Fig. 1. Schematic diagram of a general communication system (block labels: information source, transmitter, receiver, destination, noise source).

a decimal digit is about $3\frac{1}{3}$ bits.

physical counterparts. We may roughly classify communication systems into three main categories: discrete, continuous and mixed. By a discrete system we will mean one in which both the message and the signal are a sequence of discrete symbols. A typical case is telegraphy where the message is a sequence of letters and the signal a sequence of dots, dashes and spaces. A continuous system is one in which the message and signal are both treated as continuous functions, e.g., radio or television. A mixed system is one in which both discrete and continuous variables appear, e.g., PCM transmission of speech.

We first consider the discrete case. This case has applications not only in communication theory, but also in the theory of computing machines, the design of telephone exchanges and other fields. In addition the discrete case forms a foundation for the continuous and mixed cases which will be treated in the second half of the paper.

PART I: DISCRETE NOISELESS SYSTEMS

1. THE DISCRETE NOISELESS CHANNEL

Teletype and telegraphy are two simple examples of a discrete channel for transmitting information. Generally, a discrete channel will mean a system whereby a sequence of choices from a finite set of elementary symbols $S_1, \ldots, S_n$ can be transmitted from one point to another. Each of the symbols $S_i$ is assumed to have a certain duration in time $t_i$ seconds (not necessarily the same for different $S_i$, for example the dots and dashes in telegraphy). It is not required that all possible sequences of the $S_i$ be capable of transmission on the system; certain sequences only may be allowed. These will be possible signals for the channel. Thus in telegraphy suppose the symbols are: (1) A dot, consisting of line closure for a unit of time and then line open for a unit of time; (2) A dash, consisting of three time units of closure and one unit open; (3) A letter space consisting of, say, three units of line open; (4) A word space of six units of line open. We might place the restriction on allowable sequences that no spaces follow each other (for if two letter spaces are adjacent, it is identical with a word space). The question we now consider is how one can measure the capacity of such a channel to transmit information.

In the teletype case where all symbols are of the same duration, and any sequence of the 32 symbols is allowed, the answer is easy. Each symbol represents five bits of information. If the system transmits $n$ symbols per second it is natural to say that the channel has a capacity of $5n$ bits per second. This does not mean that the teletype channel will always be transmitting information at this rate; this is the maximum possible rate, and whether or not the actual rate reaches this maximum depends on the source of information which feeds the channel, as will appear later.

In the more general case with different lengths of symbols and constraints on the allowed sequences, we make the following definition:

Definition: The capacity $C$ of a discrete channel is given by

$$C = \lim_{T \to \infty} \frac{\log N(T)}{T}$$

where $N(T)$ is the number of allowed signals of duration $T$.

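If all sequences of the symbols $S_1, \ldots, S_n$ are allowed and these symbols have durations $t_1, \ldots, t_n$, the number $N(t)$ of sequences of duration $t$ satisfies the difference equation

$$N(t) = N(t - t_1) + N(t - t_2) + \cdots + N(t - t_n),$$

so that $N(t)$ is asymptotic for large $t$ to $X_0^t$, where $X_0$ is the largest real solution of the characteristic equation

$$X^{-t_1} + X^{-t_2} + \cdots + X^{-t_n} = 1,$$

and therefore

$$C = \log X_0.$$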

In case there are restrictions on allowed sequences we may still often obtain a difference equation of this type and find $C$ from the characteristic equation. In the telegraphy case mentioned above

$$N(t) = N(t-2) + N(t-4) + N(t-5) + N(t-7) + N(t-8) + N(t-10)$$

as we see by counting sequences of symbols according to the last or next to the last symbol occurring. Hence $C$ is $-\log \mu_0$ where $\mu_0$ is the positive root of $1 = \mu^2 + \mu^4 + \mu^5 + \mu^7 + \mu^8 + \mu^{10}$. Solving this we find $C = 0.539$.
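As a numerical check on the figure quoted for the telegraph case, the following sketch solves the characteristic equation by bisection. It assumes logarithms to base 2 and takes the durations 2, 4, 5, 7, 8 and 10 from the difference equation above; the function name `capacity_from_durations` is illustrative and does not come from the paper.

```python
import math

def capacity_from_durations(durations, tol=1e-12):
    """Return -log2(mu0), where mu0 in (0, 1) solves sum(mu**d) = 1.

    Assumes at least two durations, so the sum exceeds 1 at mu = 1.
    """
    def f(mu):
        return sum(mu ** d for d in durations) - 1.0

    lo, hi = 0.0, 1.0              # f(lo) < 0 and f(hi) > 0, f is increasing
    while hi - lo > tol:
        mid = (lo + hi) / 2
        if f(mid) < 0:
            lo = mid
        else:
            hi = mid
    return -math.log2((lo + hi) / 2)

# Shifts appearing in N(t) = N(t-2) + N(t-4) + ... + N(t-10).
TELEGRAPH_DURATIONS = [2, 4, 5, 7, 8, 10]
telegraph_capacity = capacity_from_durations(TELEGRAPH_DURATIONS)

# Teletype: 32 symbols of unit duration, all sequences allowed.
teletype_capacity = capacity_from_durations([1] * 32)

print(round(telegraph_capacity, 3), round(teletype_capacity, 3))
```

The same routine reproduces the teletype result of five bits per symbol, since 32 symbols of equal unit duration give $32\mu = 1$.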

A very general type of restriction which may be placed on allowed sequences is the following: We imagine a number of possible states $a_1, a_2, \ldots, a_m$. For each state only certain symbols from the set $S_1, \ldots, S_n$ can be transmitted (different subsets for the different states). When one of these has been transmitted the state changes to a new state depending both on the old state and the particular symbol transmitted. The telegraph case is a simple example of this. There are two states depending on whether or not a space was the last symbol transmitted. If so, then only a dot or a dash can be sent next and the state always changes. If not, any symbol can be transmitted and the state changes if a space is sent, otherwise it remains the same. The conditions can be indicated in a linear graph as shown in Fig. 2. The junction points correspond to the states and the lines indicate the symbols possible in a state and the resulting state.

Fig. 2. Graphical representation of the constraints on telegraph symbols.

In Appendix 1 it is shown that if the conditions on allowed sequences can be described in this form $C$ will exist and can be calculated in accordance with the following result:

Theorem 1: Let $b_{ij}^{(s)}$ be the duration of the $s$th symbol which is allowable in state $i$ and leads to state $j$. Then the channel capacity $C$ is equal to $\log W$ where $W$ is the largest real root of the determinant equation:

$$\left| \sum_s W^{-b_{ij}^{(s)}} - \delta_{ij} \right| = 0$$

where $\delta_{ij} = 1$ if $i = j$ and is zero otherwise.

For example, in the telegraph case (Fig. 2) the determinant is:

$$\begin{vmatrix} -1 & W^{-2} + W^{-4} \\ W^{-3} + W^{-6} & W^{-2} + W^{-4} - 1 \end{vmatrix} = 0$$

On expansion this leads to the equation given above for this case.
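The determinant condition can be checked numerically. The sketch below evaluates the 2×2 telegraph determinant and locates its positive root by bisection; it assumes base 2 logarithms and that the root lies between 1 and 2 (the determinant is negative at $W = 1$ and positive at $W = 2$). The helper names are illustrative.

```python
import math

def telegraph_determinant(w):
    """Determinant of Theorem 1 for the two-state telegraph graph."""
    a11 = -1.0
    a12 = w ** -2 + w ** -4
    a21 = w ** -3 + w ** -6
    a22 = w ** -2 + w ** -4 - 1.0
    return a11 * a22 - a12 * a21

def bisect_root(f, lo, hi, tol=1e-12):
    """Find a root of f in [lo, hi], assuming f(lo) < 0 < f(hi)."""
    while hi - lo > tol:
        mid = (lo + hi) / 2
        if f(mid) < 0:
            lo = mid
        else:
            hi = mid
    return (lo + hi) / 2

w_root = bisect_root(telegraph_determinant, 1.0, 2.0)
capacity = math.log2(w_root)
print(round(capacity, 3))
```

With $\mu = 1/W$ the expanded determinant is the equation $1 = \mu^2 + \mu^4 + \mu^5 + \mu^7 + \mu^8 + \mu^{10}$, so the value of $\log W$ found here should agree with $C = 0.539$.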

2. THE DISCRETE SOURCE OF INFORMATION

We have seen that under very general conditions the logarithm of the number of possible signals in a discrete channel increases linearly with time. The capacity to transmit information can be specified by giving this rate of increase, the number of bits per second required to specify the particular signal used.

We now consider the information source. How is an information source to be described mathematically, and how much information in bits per second is produced in a given source? The main point at issue is the effect of statistical knowledge about the source in reducing the required capacity of the channel, by the use of proper encoding of the information. In telegraphy, for example, the messages to be transmitted consist of sequences of letters. These sequences, however, are not completely random. In general, they form sentences and have the statistical structure of, say, English. The letter E occurs more frequently than Q, the sequence TH more frequently than XP, etc. The existence of this structure allows one to make a saving in time (or channel capacity) by properly encoding the message sequences into signal sequences. This is already done to a limited extent in telegraphy by using the shortest channel symbol, a dot, for the most common English letter E; while the infrequent letters, Q, X, Z are represented by longer sequences of dots and dashes. This idea is carried still further in certain commercial codes where common words and phrases are represented by four- or five-letter code groups with a considerable saving in average time. The standardized greeting and anniversary telegrams now in use extend this to the point of encoding a sentence or two into a relatively short sequence of numbers.

We can think of a discrete source as generating the message, symbol by symbol. It will choose successive symbols according to certain probabilities depending, in general, on preceding choices as well as the particular symbols in question. A physical system, or a mathematical model of a system which produces such a sequence of symbols governed by a set of probabilities, is known as a stochastic process.³ We may consider a discrete source, therefore, to be represented by a stochastic process. Conversely, any stochastic process which produces a discrete sequence of symbols chosen from a finite set may be considered a discrete source. This will include such cases as:

1. Natural written languages such as English, German, Chinese.

2. Continuous information sources that have been rendered discrete by some quantizing process. For example, the quantized speech from a PCM transmitter, or a quantized television signal.

3. Mathematical cases where we merely define abstractly a stochastic process which generates a sequence of symbols. The following are examples of this last type of source.

(A) Suppose we have five letters A, B, C, D, E which are chosen each with probability .2, successive choices being independent. This would lead to a sequence of which the following is a typical example.

B D C B C E C C C A D C B D D A A E C E E A A B B D A E E C A C E E B A E E C B C E A D.

This was constructed with the use of a table of random numbers.⁴

(B) Using the same five letters let the probabilities be .4, .1, .2, .2, .1, respectively, with successive choices independent. A typical message from this source is then:

A A A C D C B D C E A A D A D A C E D A E A D C A B E D A D D C E C A A A A A D.

(C) A more complicated structure is obtained if successive symbols are not chosen independently but their probabilities depend on preceding letters. In the simplest case of this type a choice depends only on the preceding letter and not on ones before that. The statistical structure can then be described by a set of transition probabilities $p_i(j)$, the probability that letter $i$ is followed by letter $j$. The indices $i$ and $j$ range over all the possible symbols. A second equivalent way of specifying the structure is to give the “digram” probabilities $p(i,j)$, i.e., the relative frequency of the digram $i\,j$. The letter frequencies $p(i)$ (the probability of letter $i$), the transition probabilities $p_i(j)$ and the digram probabilities $p(i,j)$ are related by the following formulas:

$$p(i) = \sum_j p(i,j) = \sum_j p(j,i) = \sum_j p(j)\,p_j(i)$$

$$p(i,j) = p(i)\,p_i(j)$$

$$\sum_j p_i(j) = \sum_i p(i) = \sum_{i,j} p(i,j) = 1.$$

³ See, for example, S. Chandrasekhar, “Stochastic Problems in Physics and Astronomy,” Reviews of Modern Physics, v. 15, No. 1, January 1943, p. 1.

⁴ Kendall and Smith, Tables of Random Sampling Numbers, Cambridge, 1939.

As a specific example suppose there are three letters A, B, C with the probability tables:

Transition probabilities $p_i(j)$ (row $i$, column $j$):

| $i \backslash j$ | A | B | C |
|---|---|---|---|
| A | 0 | 4/5 | 1/5 |
| B | 1/2 | 1/2 | 0 |
| C | 1/2 | 2/5 | 1/10 |

Letter probabilities $p(i)$:

| $i$ | $p(i)$ |
|---|---|
| A | 9/27 |
| B | 16/27 |
| C | 2/27 |

Digram probabilities $p(i,j)$ (row $i$, column $j$):

| $i \backslash j$ | A | B | C |
|---|---|---|---|
| A | 0 | 4/15 | 1/15 |
| B | 8/27 | 8/27 | 0 |
| C | 1/27 | 4/135 | 1/135 |

A typical message from this source is the following:

A B B A B A B A B A B A B A B B B A B B B B B A B A B A B A B A B B B A C A C A B B A B B B B A B B A B A C B B B A B A.

The next increase in complexity would involve trigram frequencies but no more. The choice of a letter would depend on the preceding two letters but not on the message before that point. A set of trigram frequencies $p(i,j,k)$ or equivalently a set of transition probabilities $p_{ij}(k)$ would be required. Continuing in this way one obtains successively more complicated stochastic processes. In the general $n$-gram case a set of $n$-gram probabilities $p(i_1, i_2, \ldots, i_n)$ or of transition probabilities $p_{i_1, i_2, \ldots, i_{n-1}}(i_n)$ is required to specify the statistical structure.

(D) Stochastic processes can also be defined which produce a text consisting of a sequence of “words.” Suppose there are five letters A, B, C, D, E and 16 “words” in the language with associated probabilities:

| | | | |
|---|---|---|---|
| .10 A | .16 BEBE | .11 CABED | .04 DEB |
| .04 ADEB | .04 BED | .05 CEED | .15 DEED |
| .05 ADEE | .02 BEED | .08 DAB | .01 EAB |
| .01 BADD | .05 CA | .04 DAD | .05 EE |

Suppose successive “words” are chosen independently and are separated by a space. A typical message might be:

DAB EE A BEBE DEED DEB ADEE ADEE EE DEB BEBE BEBE BEBE ADEE BED DEED DEED CEED ADEE A DEED DEED BEBE CABED BEBE BED DAB DEED ADEB.

If all the words are of finite length this process is equivalent to one of the preceding type, but the description may be simpler in terms of the word structure and probabilities. We may also generalize here and introduce transition probabilities between words, etc.
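The artificial sources of examples B, C and D can be simulated directly. The following is a minimal sketch using Python's standard `random` module in place of a table of random numbers; the starting letter for example C and the seed are assumptions made only so the run is repeatable, and the function names are illustrative.

```python
import random

# Example B: five letters with unequal probabilities, chosen independently.
LETTERS = "ABCDE"
PROBS_B = [0.4, 0.1, 0.2, 0.2, 0.1]

# Example C: transition probabilities p_i(j) between the letters A, B, C.
TRANSITIONS_C = {
    "A": {"A": 0.0, "B": 0.8, "C": 0.2},
    "B": {"A": 0.5, "B": 0.5, "C": 0.0},
    "C": {"A": 0.5, "B": 0.4, "C": 0.1},
}

# Example D: the 16 "words" with their probabilities.
WORDS_D = {
    "A": 0.10, "BEBE": 0.16, "CABED": 0.11, "DEB": 0.04,
    "ADEB": 0.04, "BED": 0.04, "CEED": 0.05, "DEED": 0.15,
    "ADEE": 0.05, "BEED": 0.02, "DAB": 0.08, "EAB": 0.01,
    "BADD": 0.01, "CA": 0.05, "DAD": 0.04, "EE": 0.05,
}

def sample_independent(symbols, probs, count, rng):
    """Draw `count` symbols independently with the given probabilities."""
    return rng.choices(symbols, weights=probs, k=count)

def sample_markov(transitions, count, rng, start="A"):
    """Draw `count` letters; each depends only on the preceding letter."""
    letters = [start]                      # assumed starting letter
    while len(letters) < count:
        row = transitions[letters[-1]]
        letters.append(rng.choices(list(row), weights=list(row.values()))[0])
    return letters

rng = random.Random(1948)                  # fixed seed so runs are repeatable
message_b = " ".join(sample_independent(LETTERS, PROBS_B, 40, rng))
message_c = " ".join(sample_markov(TRANSITIONS_C, 40, rng))
message_d = " ".join(
    sample_independent(list(WORDS_D), list(WORDS_D.values()), 12, rng)
)
print(message_b, message_c, message_d, sep="\n")
```

In the output for example C the pairs A A and B C never occur, because their transition probabilities are zero.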

These artificial languages are useful in constructing simple problems and examples to illustrate various possibilities. We can also approximate to a natural language by means of a series of simple artificial languages. The zero-order approximation is obtained by choosing all letters with the same probability and independently. The first-order approximation is obtained by choosing successive letters independently but each letter having the same probability that it has in the natural language.⁵ Thus, in the first-order approximation to English, E is chosen with probability .12 (its frequency in normal English) and W with probability .02, but there is no influence between adjacent letters and no tendency to form the preferred digrams such as TH, ED, etc. In the second-order approximation, digram structure is introduced. After a letter is chosen, the next one is chosen in accordance with the frequencies with which the various letters follow the first one. This requires a table of digram frequencies $p_i(j)$. In the third-order approximation, trigram structure is introduced. Each letter is chosen with probabilities which depend on the preceding two letters.

⁵ Letter, digram and trigram frequencies are given in Secret and Urgent by Fletcher Pratt, Blue Ribbon Books, 1939. Word frequencies are tabulated in Relative Frequency of English Speech Sounds, G. Dewey, Harvard University Press, 1923.

3. THE SERIES OF APPROXIMATIONS TO ENGLISH

To give a visual idea of how this series of processes approaches a language, typical sequences in the approximations to English have been constructed and are given below. In all cases we have assumed a 27-symbol “alphabet,” the 26 letters and a space.

1. Zero-order approximation (symbols independent and equiprobable).

XFOML RXKHRJFFJUJ ZLPWCFWKCYJ FFJEYVKCQSGHYD QPAAMKBZAACIBZLHJQD.

2. First-order approximation (symbols independent but with frequencies of English text).

OCRO HLI RGWR NMIELWIS EU LL NBNESEBY A TH EEI ALHENHTTPA OOBTTV A NAH BRL.

3. Second-order approximation (digram structure as in English).

ON IE ANTSOUTINYS ARE T INCTORE ST BE S DEAMY ACHIN D ILONASIVE TUCOOWE AT TEASONARE FUSO TIZIN ANDY TOBE SEACE CTISBE.

4. Third-order approximation (trigram structure as in English).

IN NO IST LAT WHEY CRATICT FROURE BIRS GROCID PONDENOME OF DEMONSTURES OF THE REPTAGIN IS REGOACTIONA OF CRE.

5. First-order word approximation. Rather than continue with tetragram, ..., $n$-gram structure it is easier and better to jump at this point to word units. Here words are chosen independently but with their appropriate frequencies.

REPRESENTING AND SPEEDILY IS AN GOOD APT OR COME CAN DIFFERENT NATURAL HERE HE THE A IN CAME THE TO OF TO EXPERT GRAY COME TO FURNISHES THE LINE MESSAGE HAD BE THESE.

6. Second-order word approximation. The word transition probabilities are correct but no further structure is included.

THE HEAD AND IN FRONTAL ATTACK ON AN ENGLISH WRITER THAT THE CHARACTER OF THIS POINT IS THEREFORE ANOTHER METHOD FOR THE LETTERS THAT THE TIME OF WHO EVER TOLD THE PROBLEM FOR AN UNEXPECTED.

The resemblance to ordinary English text increases quite noticeably at each of the above steps. Note that these samples have reasonably good structure out to about twice the range that is taken into account in their construction. Thus in (3) the statistical process insures reasonable text for two-letter sequences, but four-letter sequences from the sample can usually be fitted into good sentences. In (6) sequences of four or more words can easily be placed in sentences without unusual or strained constructions. The particular sequence of ten words “attack on an English writer that the character of this” is not at all unreasonable. It appears then that a sufficiently complex stochastic process will give a satisfactory representation of a discrete source.

The first two samples were constructed by the use of a book of random numbers in conjunction with (for example 2) a table of letter frequencies. This method might have been continued for (3), (4) and (5), since digram, trigram and word frequency tables are available, but a simpler equivalent method was used. To construct (3) for example, one opens a book at random and selects a letter at random on the page. This letter is recorded. The book is then opened to another page and one reads until this letter is encountered. The succeeding letter is then recorded. Turning to another page this second letter is searched for and the succeeding letter recorded, etc. A similar process was used for (4), (5) and (6). It would be interesting if further approximations could be constructed, but the labor involved becomes enormous at the next stage.
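The book-searching procedure is equivalent to sampling each new letter from the letters that follow the current context in some body of text. The sketch below does this with frequency counts rather than page turning. It is not the procedure used for the samples above: the one-sentence `SAMPLE` stands in for a book, the text is treated as circular so that every context has a successor, and a context of $k$ letters corresponds to the $(k+1)$th-order approximation. All names are illustrative.

```python
import random
from collections import Counter, defaultdict

SAMPLE = (
    "THE FUNDAMENTAL PROBLEM OF COMMUNICATION IS THAT OF REPRODUCING "
    "AT ONE POINT EITHER EXACTLY OR APPROXIMATELY A MESSAGE SELECTED "
    "AT ANOTHER POINT "
)

def build_model(text, context_len):
    """Count which symbol follows each run of `context_len` symbols."""
    wrapped = text + text[:context_len]    # circular text: no dead-end contexts
    followers = defaultdict(Counter)
    for i in range(len(text)):
        followers[wrapped[i:i + context_len]][wrapped[i + context_len]] += 1
    return followers

def approximate(text, context_len, length, rng):
    """Generate `length` symbols whose (context_len + 1)-grams occur in `text`."""
    model = build_model(text, context_len)
    out = rng.choice(sorted(model))        # random starting context
    while len(out) < length:
        counts = model[out[len(out) - context_len:]]
        symbols = sorted(counts)
        out += rng.choices(symbols, weights=[counts[s] for s in symbols])[0]
    return out

rng = random.Random(27)                    # fixed seed so runs are repeatable
first_order = approximate(SAMPLE, 0, 60, rng)    # letter frequencies only
second_order = approximate(SAMPLE, 1, 60, rng)   # digram structure
third_order = approximate(SAMPLE, 2, 60, rng)    # trigram structure
print(first_order, second_order, third_order, sep="\n")
```

With so short a sample the higher-order outputs largely reproduce stretches of the source sentence; a longer text would be needed to approach the variety of the samples listed above.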

4. GRAPHICAL REPRESENTATION OF A MARKOFF PROCESS

Stochastic processes of the type described above are known mathematically as discrete Markoff processes and have been extensively studied in the literature.⁶ The general case can be described as follows: There exist a finite number of possible “states” of a system; $S_1, S_2, \ldots, S_n$. In addition there is a set of transition probabilities; $p_i(j)$ the probability that if the system is in state $S_i$ it will next go to state $S_j$. To make this Markoff process into an information source we need only assume that a letter is produced for each transition from one state to another. The states will correspond to the “residue of influence” from preceding letters.

The situation can be represented graphically as shown in Figs. 3, 4 and 5. The “states” are the junction points in the graph and the probabilities and letters produced for a transition are given beside the corresponding line. Figure 3 is for the example B in Section 2, while Fig. 4 corresponds to the example C. In Fig. 3 there is only one state since successive letters are independent. In Fig. 4 there are as many states as letters. If a trigram example were constructed there would be at most $n^2$ states corresponding to the possible pairs of letters preceding the one being chosen. Figure 5 is a graph for the case of word structure in example D. Here S corresponds to the “space” symbol.

Fig. 3. A graph corresponding to the source in example B.

Fig. 4. A graph corresponding to the source in example C.

5. ERGODIC AND MIXED SOURCES

As we have indicated above a discrete source for our purposes can be considered to be represented by a Markoff process. Among the possible discrete Markoff processes there is a group with special properties of significance in communication theory. This special class consists of the “ergodic” processes and we shall call the corresponding sources ergodic sources. Although a rigorous definition of an ergodic process is somewhat involved, the general idea is simple. In an ergodic process every sequence produced by the process is the same in statistical properties. Thus the letter frequencies, digram frequencies, etc., obtained from particular sequences, will, as the lengths of the sequences increase, approach definite limits independent of the particular sequence. Actually this is not true of every sequence but the set for which it is false has probability zero. Roughly the ergodic property means statistical homogeneity.

⁶ For a detailed treatment see M. Fréchet, Méthode des fonctions arbitraires. Théorie des événements en chaîne dans le cas d’un nombre fini d’états possibles. Paris, Gauthier-Villars, 1938.

All the examples of artificial languages given above are ergodic. This property is related to the structure of the corresponding graph. If the graph has the following two properties⁷ the corresponding process will be ergodic:

1. The graph does not consist of two isolated parts A and B such that it is impossible to go from junction points in part A to junction points in part B along lines of the graph in the direction of arrows and also impossible to go from junctions in part B to junctions in part A.

2. A closed series of lines in the graph with all arrows on the lines pointing in the same orientation will be called a “circuit.” The “length” of a circuit is the number of lines in it. Thus in Fig. 5 series BEBES is a circuit of length 5. The second property required is that the greatest common divisor of the lengths of all circuits in the graph be one.

If the first condition is satisfied but the second one violated by having the greatest common divisor equal to $d > 1$, the sequences have a certain type of periodic structure. The various sequences fall into $d$ different classes which are statistically the same apart from a shift of the origin (i.e., which letter in the sequence is called letter 1). By a shift of from 0 up to $d - 1$ any sequence can be made statistically equivalent to any other. A simple example with $d = 2$ is the following: There are three possible letters $a, b, c$. Letter $a$ is followed with either $b$ or $c$ with probabilities $\frac{1}{3}$ and $\frac{2}{3}$ respectively. Either $b$ or $c$ is always followed by letter $a$. Thus a typical sequence is

a b a c a c a c a b a c a b a b a c a c

This type of situation is not of much importance for our work.
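The two graph conditions can be tested mechanically. The sketch below is one way to do so, under an assumption: it uses strong connectivity (every junction reachable from every other) as a stand-in for the first condition, which is somewhat stronger than the statement above. The greatest common divisor of circuit lengths is computed from breadth-first depths, a standard technique that is valid only for a strongly connected graph. Graphs are dictionaries mapping each junction to the junctions its lines lead to; all names are illustrative.

```python
from collections import deque
from math import gcd

def reachable(graph, start):
    """Set of junctions reachable from `start` along the arrows."""
    seen = {start}
    queue = deque([start])
    while queue:
        node = queue.popleft()
        for nxt in graph[node]:
            if nxt not in seen:
                seen.add(nxt)
                queue.append(nxt)
    return seen

def is_strongly_connected(graph):
    """True if every junction can reach every other junction."""
    reverse = {node: [] for node in graph}
    for node, successors in graph.items():
        for nxt in successors:
            reverse[nxt].append(node)
    start = next(iter(graph))
    nodes = set(graph)
    return reachable(graph, start) == nodes and reachable(reverse, start) == nodes

def circuit_gcd(graph):
    """GCD of all circuit lengths; assumes the graph is strongly connected."""
    start = next(iter(graph))
    depth = {start: 0}
    queue = deque([start])
    result = 0
    while queue:
        node = queue.popleft()
        for nxt in graph[node]:
            if nxt not in depth:
                depth[nxt] = depth[node] + 1
                queue.append(nxt)
            # Every line contributes depth[node] + 1 - depth[nxt] to the gcd.
            result = gcd(result, depth[node] + 1 - depth[nxt])
    return result

# The periodic example: a goes to b or c, and both return to a.
PERIODIC = {"a": ["b", "c"], "b": ["a"], "c": ["a"]}
# Example C of Section 2: lines exist where the transition probability is nonzero.
EXAMPLE_C = {"A": ["B", "C"], "B": ["A", "B"], "C": ["A", "B", "C"]}

print(circuit_gcd(PERIODIC), circuit_gcd(EXAMPLE_C))
```

For the periodic example every circuit has even length, so the divisor is 2; in example C the line from B to itself is a circuit of length 1, which forces the divisor to one.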

If the first condition is violated the graph may be separated into a set of subgraphs each of which satisfies the first condition. We will assume that the second condition is also satisfied for each subgraph. We have in this case what may be called a “mixed” source made up of a number of pure components. The components correspond to the various subgraphs. If $L_1, L_2, L_3, \ldots$ are the component sources we may write

$$L = p_1 L_1 + p_2 L_2 + p_3 L_3 + \cdots$$

where $p_i$ is the probability of the component source $L_i$.

⁷ These are restatements in terms of the graph of conditions given in Fréchet.

Physically the situation represented is this: There are several different sources $L_1, L_2, L_3, \ldots$ which are each of homogeneous statistical structure (i.e., they are ergodic). We do not know a priori which is to be used, but once the sequence starts in a given pure component $L_i$, it continues indefinitely according to the statistical structure of that component.

As an example one may take two of the processes defined above and assume $p_1 = .2$ and $p_2 = .8$. A sequence from the mixed source

$$L = .2 L_1 + .8 L_2$$

would be obtained by choosing first $L_1$ or $L_2$ with probabilities .2 and .8 and after this choice generating a sequence from whichever was chosen.

Except when the contrary is stated we shall assume a source to be ergodic. This assumption enables one to identify averages along a sequence with averages over the ensemble of possible sequences (the probability of a discrepancy being zero). For example the relative frequency of the letter A in a particular infinite sequence will be, with probability one, equal to its relative frequency in the ensemble of sequences.

If $P_i$ is the probability of state $i$ and $p_i(j)$ the transition probability to state $j$, then for the process to be stationary it is clear that the $P_i$ must satisfy equilibrium conditions:

$$P_j = \sum_i P_i \, p_i(j).$$

In the ergodic case it can be shown that with any starting conditions the probabilities $P_j(N)$ of being in state $j$ after $N$ symbols, approach the equilibrium values as $N \to \infty$.
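The approach to equilibrium can be observed by applying the equilibrium relation repeatedly to an arbitrary starting distribution. The sketch below does this for the transition probabilities of example C in Section 2; the starting distribution (certainly in state A) and the number of iterations are arbitrary choices, and the names are illustrative.

```python
# Transition probabilities p_i(j) of example C.
TRANSITIONS_C = {
    "A": {"A": 0.0, "B": 0.8, "C": 0.2},
    "B": {"A": 0.5, "B": 0.5, "C": 0.0},
    "C": {"A": 0.5, "B": 0.4, "C": 0.1},
}

def step(dist, transitions):
    """One symbol later: P_j(N + 1) = sum over i of P_i(N) * p_i(j)."""
    new = {state: 0.0 for state in transitions}
    for i, p_i in dist.items():
        for j, p_ij in transitions[i].items():
            new[j] += p_i * p_ij
    return new

dist = {"A": 1.0, "B": 0.0, "C": 0.0}      # arbitrary starting condition
for _ in range(100):
    dist = step(dist, TRANSITIONS_C)

print({state: round(p, 4) for state, p in dist.items()})
```

The limiting values agree with the letter probabilities 9/27, 16/27 and 2/27 given for example C, which satisfy the equilibrium conditions exactly.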

6. CHOICE, UNCERTAINTY AND ENTROPY

We have represented a discrete information source as a Markoff process. Can we define a quantity which will measure, in some sense, how much information is “produced” by such a process, or better, at what rate information is produced?

Suppose we have a set of possible events whose probabilities of occurrence are $p_1, p_2, \ldots, p_n$. These probabilities are known but that is all we know concerning which event will occur. Can we find a measure of how much “choice” is involved in the selection of the event or of how uncertain we are of the outcome?

If there is such a measure, say $H(p_1, p_2, \ldots, p_n)$, it is reasonable to require of it the following properties:

1. $H$ should be continuous in the $p_i$.

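2. If all the $p_i$ are equal, $p_i = \frac{1}{n}$, then $H$ should be a monotonic increasing function of $n$. With equally likely events there is more choice, or uncertainty, when there are more possible events.

3. If a choice be broken down into two successive choices, the original $H$ should be the weighted sum of the individual values of $H$. The meaning of this is illustrated in Fig. 6. At the left we have three possibilities $p_1 = \frac{1}{2}$, $p_2 = \frac{1}{3}$, $p_3 = \frac{1}{6}$. On the right we first choose between two possibilities each with probability $\frac{1}{2}$, and if the second occurs make another choice with probabilities $\frac{2}{3}$, $\frac{1}{3}$. The final results have the same probabilities as before. We require, in this special case, that

$$H\left(\tfrac{1}{2}, \tfrac{1}{3}, \tfrac{1}{6}\right) = H\left(\tfrac{1}{2}, \tfrac{1}{2}\right) + \tfrac{1}{2} H\left(\tfrac{2}{3}, \tfrac{1}{3}\right).$$

The coefficient $\frac{1}{2}$ is because this second choice only occurs half the time.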

In Appendix 2, the following result is established:

Theorem 2: The only $H$ satisfying the three above assumptions is of the form:

$$H = -K \sum_{i=1}^{n} p_i \log p_i$$

where $K$ is a positive constant.

This theorem, and the assumptions required for its proof, are in no way necessary for the present theory. It is given chiefly to lend a certain plausibility to some of our later definitions. The real justification of these definitions, however, will reside in their implications.

Quantities of the form $H = -\sum p_i \log p_i$ (the constant $K$ merely amounts to a choice of a unit of measure) play a central role in information theory as measures of information, choice and uncertainty. The form of $H$ will be recognized as that of entropy as defined in certain formulations of statistical mechanics⁸ where $p_i$ is the probability of a system being in cell $i$ of its phase space. $H$ is then, for example, the $H$ in Boltzmann’s famous $H$ theorem. We shall call $H = -\sum p_i \log p_i$ the entropy of the set of probabilities $p_1, \ldots, p_n$. If $x$ is a chance variable we will write $H(x)$ for its entropy; thus $x$ is not an argument of a function but a label for a number, to differentiate it from $H(y)$ say, the entropy of the chance variable $y$.

The entropy in the case of two possibilities with probabilities $p$ and $q = 1 - p$, namely

$$H = -(p \log p + q \log q)$$

is plotted in Fig. 7 as a function of $p$.

Fig. 7. Entropy in the case of two possibilities with probabilities $p$ and $(1 - p)$ (vertical axis: $H$ in bits; horizontal axis: $p$).
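A short numerical sketch may help fix the definition. It computes $H = -\sum p_i \log p_i$ with $K$ chosen so that the unit is the bit (base 2 logarithms, an assumption about units only), and evaluates the three-way choice with probabilities 1/2, 1/3, 1/6 against its two-stage decomposition. The function names are illustrative.

```python
import math

def entropy(probs):
    """H = -sum p_i log2 p_i in bits; terms with p_i = 0 contribute nothing."""
    return -sum(p * math.log2(p) for p in probs if p > 0)

def binary_entropy(p):
    """Entropy of two possibilities with probabilities p and 1 - p."""
    return entropy([p, 1 - p])

# A choice among 1/2, 1/3, 1/6 made directly, and made in two stages.
direct = entropy([1 / 2, 1 / 3, 1 / 6])
two_stage = entropy([1 / 2, 1 / 2]) + 0.5 * entropy([2 / 3, 1 / 3])

# A few points of the two-possibility curve.
curve = [(p / 10, binary_entropy(p / 10)) for p in range(11)]

print(direct, two_stage)
```

The two values agree, and the tabulated curve rises from zero at $p = 0$ to one bit at $p = \frac{1}{2}$ and falls symmetrically back to zero at $p = 1$.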

The quantity H has a number of interesting properties which further substantiate it as a reasonable measure of choice or information.

1. $H = 0$ if and only if all the $p_i$ but one are zero, this one having the value unity. Thus only when we are certain of the outcome does $H$ vanish. Otherwise $H$ is positive.

2. For a given $n$, $H$ is a maximum and equal to $\log n$ when all the $p_i$ are equal (i.e., $\frac{1}{n}$).

3. Suppose there are two events, $x$ and $y$, in question with $m$ possibilities for the first and $n$ for the second. Let $p(i,j)$ be the probability of the joint occurrence of $i$ for the first and $j$ for the second. The entropy of the joint event is

$$H(x,y) = -\sum_{i,j} p(i,j) \log p(i,j)$$

while

$$H(x) = -\sum_{i,j} p(i,j) \log \sum_j p(i,j)$$

$$H(y) = -\sum_{i,j} p(i,j) \log \sum_i p(i,j).$$

It is easily shown that

$$H(x,y) \le H(x) + H(y)$$

with equality only if the events are independent (i.e., $p(i,j) = p(i)\,p(j)$). The uncertainty of a joint event is less than or equal to the sum of the individual uncertainties.

4. Any change toward equalization of the probabilities $p_1, p_2, \ldots, p_n$ increases $H$. Thus if $p_1 < p_2$ and we increase $p_1$, decreasing $p_2$ an equal amount so that $p_1$ and $p_2$ are more nearly equal, then $H$ increases. More generally, if we perform any “averaging” operation on the $p_i$ of the form

$$p_i' = \sum_j a_{ij} p_j$$

where $\sum_i a_{ij} = \sum_j a_{ij} = 1$, and all $a_{ij} \ge 0$, then $H$ increases (except in the special case where this transformation amounts to no more than a permutation of the $p_j$ with $H$ of course remaining the same).

5. Suppose there are two chance events $x$ and $y$ as in 3, not necessarily independent. For any particular value $i$ that $x$ can assume there is a conditional probability $p_i(j)$ that $y$ has the value $j$. This is given by

$$p_i(j) = \frac{p(i,j)}{\sum_j p(i,j)}.$$
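The joint-event properties can be illustrated with a small table. The sketch below borrows the digram probabilities of example C in Section 2 as a joint distribution $p(i,j)$ (a choice made here only for illustration), forms the two marginal distributions, compares $H(x,y)$ with $H(x) + H(y)$, and recovers the conditional probabilities $p_i(j)$. Base 2 logarithms are assumed and the names are illustrative.

```python
import math

def entropy(probs):
    """H = -sum p log2 p in bits; zero-probability terms are skipped."""
    return -sum(p * math.log2(p) for p in probs if p > 0)

# Digram probabilities p(i, j) of example C, used as a joint distribution.
JOINT = {
    ("A", "A"): 0.0,    ("A", "B"): 4 / 15,  ("A", "C"): 1 / 15,
    ("B", "A"): 8 / 27, ("B", "B"): 8 / 27,  ("B", "C"): 0.0,
    ("C", "A"): 1 / 27, ("C", "B"): 4 / 135, ("C", "C"): 1 / 135,
}

def marginals(joint):
    """Return the distributions of the first and of the second event."""
    px, py = {}, {}
    for (i, j), p in joint.items():
        px[i] = px.get(i, 0.0) + p
        py[j] = py.get(j, 0.0) + p
    return px, py

def conditional(joint):
    """p_i(j) = p(i, j) / sum over j of p(i, j)."""
    px, _ = marginals(joint)
    return {(i, j): p / px[i] for (i, j), p in joint.items()}

px, py = marginals(JOINT)
h_xy = entropy(JOINT.values())
h_x, h_y = entropy(px.values()), entropy(py.values())

# An independent pair with the same marginals attains equality.
independent = {(i, j): px[i] * py[j] for i in px for j in py}
h_independent = entropy(independent.values())

cond = conditional(JOINT)
print(h_xy, h_x + h_y, h_independent)
```

Here the joint entropy is strictly smaller than the sum because successive letters of example C are not independent, and the recovered conditional probabilities coincide with the transition table of that example.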

7. THE ENTROPY OF AN INFORMATION SOURCE

Consider a discrete source of the finite state type considered above. For each possible state $i$ there will be a set of probabilities $p_i(j)$ of producing the various possible symbols $j$. Thus there is an entropy $H_i$ for each state. The entropy of the source will be defined as the average of these $H_i$ weighted in accordance with the probability of occurrence of the states in question:

$$H = \sum_i P_i H_i = -\sum_{i,j} P_i \, p_i(j) \log p_i(j).$$

This is the entropy of the source per symbol of text. If the Markoff process is proceeding at a definite time rate there is also an entropy per second

$$H' = \sum_i f_i H_i$$

where $f_i$ is the average frequency (occurrences per second) of state $i$. Clearly

$$H' = mH$$

where $m$ is the average number of symbols produced per second. $H$ or $H'$ measures the amount of information generated by the source per symbol or per second. If the logarithmic base is 2, they will represent bits per symbol or per second.
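As a worked instance of this definition, the sketch below computes $H = \sum_i P_i H_i$ for example C of Section 2, taking the states to be the preceding letters and the state probabilities to be the letter probabilities 9/27, 16/27, 2/27. Base 2 logarithms are assumed, and the symbol rate `SYMBOLS_PER_SECOND` is a hypothetical value chosen only to illustrate $H' = mH$.

```python
import math

# Transition probabilities p_i(j) and state probabilities P_i of example C.
TRANSITIONS_C = {
    "A": {"A": 0.0, "B": 0.8, "C": 0.2},
    "B": {"A": 0.5, "B": 0.5, "C": 0.0},
    "C": {"A": 0.5, "B": 0.4, "C": 0.1},
}
STATE_PROBS_C = {"A": 9 / 27, "B": 16 / 27, "C": 2 / 27}

def entropy(probs):
    """H = -sum p log2 p in bits; zero-probability terms are skipped."""
    return -sum(p * math.log2(p) for p in probs if p > 0)

def source_entropy(state_probs, transitions):
    """Average of the per-state entropies H_i weighted by P_i."""
    return sum(
        state_probs[i] * entropy(transitions[i].values()) for i in transitions
    )

H = source_entropy(STATE_PROBS_C, TRANSITIONS_C)      # bits per symbol

SYMBOLS_PER_SECOND = 10                                # hypothetical rate m
H_per_second = SYMBOLS_PER_SECOND * H                  # bits per second

print(round(H, 3), round(H_per_second, 2))
```

The result is below $\log_2 3$, the value the entropy would take if the three letters were equiprobable and independent.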

If successive symbols are independent then $H$ is simply $-\sum p_i \log p_i$ where $p_i$ is the probability of symbol $i$. Suppose in this case we consider a long message of $N$ symbols. It will contain with high probability about $p_1 N$ occurrences of the first symbol, $p_2 N$ occurrences of the second, etc. Hence the probability of this particular message will be roughly

$$p \doteq p_1^{p_1 N} p_2^{p_2 N} \cdots p_n^{p_n N}$$

or

$$\log p \doteq N \sum_i p_i \log p_i$$

$$\log p \doteq -NH$$

$$H \doteq \frac{\log 1/p}{N}.$$

In other words we are almost certain to have $\frac{\log p^{-1}}{N}$ very close to $H$ when $N$ is large.

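Theorem 4:

$$\lim_{N \to \infty} \frac{\log n(q)}{N} = H$$

when $q$ does not equal 0 or 1.

Here $n(q)$ is the number of sequences of length $N$ that must be taken, starting with the most probable one, in order to accumulate a total probability $q$, and $\frac{\log n(q)}{N}$ is the number of bits per symbol for the specification. The theorem says that for large $N$ this will be independent of $q$ and equal to $H$. The rate of growth of the logarithm of the number of reasonably probable sequences is given by $H$, regardless of our interpretation of “reasonably probable.” Due to these results, which are proved in Appendix 3, it is possible for most purposes to treat the long sequences as though there were just $2^{HN}$ of them, each with a probability $2^{-HN}$.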
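The statement about the number of reasonably probable sequences can be explored numerically for the simplest source. The sketch below is built on assumptions: it takes $n(q)$ to be the number of length-$N$ sequences, counted from the most probable downward, needed to accumulate total probability $q$, and it uses a source of two symbols chosen independently, the second with probability $p < \frac{1}{2}$. Sequences with the same number of the rarer symbol are equally probable, so they are counted in groups. Floating-point range limits this direct computation to $N$ of about 1000. All names are illustrative.

```python
import math

def binary_entropy(p):
    """Entropy in bits of two possibilities with probabilities p and 1 - p."""
    return -(p * math.log2(p) + (1 - p) * math.log2(1 - p))

def bits_per_symbol(n, p, q):
    """log2 n(q) / n for independent symbols, the rarer having probability p < 1/2."""
    total_prob = 0.0
    count = 0
    for k in range(n + 1):                 # k rarer symbols: decreasing probability
        each = p ** k * (1 - p) ** (n - k) # probability of one such sequence
        group = math.comb(n, k)            # number of sequences with k rarer symbols
        if total_prob + group * each >= q:
            # Only part of this group is needed to reach probability q.
            needed = math.ceil((q - total_prob) / each)
            count += min(max(needed, 1), group)
            break
        total_prob += group * each
        count += group
    return math.log2(count) / n

P = 0.2
H = binary_entropy(P)
table = {(n, q): bits_per_symbol(n, P, q) for n in (50, 200, 1000) for q in (0.5, 0.9)}
for key, value in sorted(table.items()):
    print(key, round(value, 4), "H =", round(H, 4))
```

For fixed $q$ the printed values move toward $H$ as $N$ grows, though the convergence is slow and the values for different $q$ still differ noticeably at $N = 1000$.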

The next two theorems show that $H$ and $H'$ can be determined by limiting operations directly from the statistics of the message sequences, without reference to the states and transition probabilities between states.

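Theorem 5: Let $p(B_i)$ be the probability of a sequence $B_i$ of symbols from the source. Let

$$G_N = -\frac{1}{N} \sum_i p(B_i) \log p(B_i)$$

where the sum is over all sequences $B_i$ containing $N$ symbols. Then $G_N$ is a monotonic decreasing function of $N$ and

$$\lim_{N \to \infty} G_N = H.$$

Theorem 6: Let $p(B_i, S_j)$ be the probability of sequence $B_i$ followed by symbol $S_j$ and $p_{B_i}(S_j) = p(B_i, S_j)/p(B_i)$ be the conditional probability of $S_j$ after $B_i$. Let

$$F_N = -\sum_{i,j} p(B_i, S_j) \log p_{B_i}(S_j)$$

where the sum is over all blocks $B_i$ of $N-1$ symbols and over all symbols $S_j$. Then $F_N$ is a monotonic decreasing function of $N$,

$$F_N = N G_N - (N-1) G_{N-1},$$

$$G_N = \frac{1}{N} \sum_{n=1}^{N} F_n,$$

$$F_N \le G_N,$$

and $\lim_{N \to \infty} F_N = H$.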

These results are derived in Appendix 3. They show that a series of approximations to $H$ can be obtained by considering only the statistical structure of the sequences extending over $1, 2, \ldots, N$ symbols. $F_N$ is the better approximation. In fact $F_N$ is the entropy of the $N$th order approximation to the source of the type discussed above. If there are no statistical influences extending over more than $N$ symbols, that is if the conditional probability of the next symbol knowing the preceding $(N-1)$ is not changed by a knowledge of any before that, then $F_N = H$. $F_N$ of course is the conditional entropy of the next symbol when the $(N-1)$ preceding ones are known, while $G_N$ is the entropy per symbol of blocks of $N$ symbols.
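The two approximations can be compared on a small example. The sketch below assumes a hypothetical source in which each symbol depends only on the one before it (the transition table and stationary probabilities are illustrative, not from the text). $G_N$ is computed from the probabilities of all blocks of $N$ symbols, and $F_N$ as the difference of successive block entropies, which by the chain rule equals the conditional entropy of the next symbol given the $N-1$ preceding ones.

```python
import itertools
import math

# Hypothetical source: the state is the last symbol emitted (assumed values).
T = [[0.9, 0.1], [0.4, 0.6]]   # T[a][b]: probability that symbol b follows symbol a
PI = [0.8, 0.2]                # stationary probabilities of symbols 0 and 1

def block_entropy(n):
    """Entropy in bits of blocks of n successive symbols (0 for n = 0)."""
    total = 0.0
    if n == 0:
        return total
    for block in itertools.product(range(2), repeat=n):
        prob = PI[block[0]]
        for a, b in zip(block, block[1:]):
            prob *= T[a][b]
        total -= prob * math.log2(prob)
    return total

def G(n):
    """Entropy per symbol of blocks of n symbols."""
    return block_entropy(n) / n

def F(n):
    """Conditional entropy of the next symbol given the n - 1 preceding ones."""
    return block_entropy(n) - block_entropy(n - 1)

# Entropy of the source as the weighted average of the state entropies.
H = sum(PI[a] * -sum(q * math.log2(q) for q in T[a]) for a in range(2))

for n in range(1, 7):
    print(n, F(n), G(n), H)
```

Because the statistical influence in this source extends over only two symbols, $F_N$ coincides with $H$ from $N = 2$ onward, while $G_N$ approaches $H$ only gradually.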

The ratio of the entropy of a source to the maximum value it could have while still restricted to the same symbols will be called its relative entropy. This is the maximum compression possible when we encode into the same alphabet. One minus the relative entropy is the redundancy. The redundancy of ordinary English, not considering statistical structure over greater distances than about eight letters, is roughly 50%. This means that when we write English half of what we write is determined by the structure of the language and half is chosen freely. The figure 50% was found by several independent methods which all gave results in this neighborhood. One is by calculation of the entropy of the approximations to English. A second method is to delete a certain fraction of the letters from a sample of English text and then let someone attempt to restore them. If they can be restored when 50% are deleted the redundancy must be greater than 50%. A third method depends on certain known results in cryptography.
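As a minimal sketch of these two definitions, the functions below compute the relative entropy and the redundancy of a source whose successive symbols are assumed independent, so that only the symbol frequencies matter. The function names and the sample probabilities are illustrative assumptions; a figure such as the 50% quoted for English requires statistics over longer spans than single letters.

```python
import math

def relative_entropy(probs):
    """Entropy of the symbol distribution divided by its maximum, log2(alphabet size)."""
    H = -sum(p * math.log2(p) for p in probs if p > 0)
    return H / math.log2(len(probs))

def redundancy(probs):
    """One minus the relative entropy."""
    return 1.0 - relative_entropy(probs)

# Hypothetical four-symbol source with unequal frequencies.
print(redundancy([0.7, 0.1, 0.1, 0.1]))
# Equally likely symbols leave no redundancy.
print(redundancy([0.25, 0.25, 0.25, 0.25]))
```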

Two extremes of redundancy in English prose are represented by Basic English and by James Joyce’s book “Finnegans Wake”. The Basic English vocabulary is limited to 850 words and the redundancy is very high. This is reflected in the expansion that occurs when a passage is translated into Basic English. Joyce on the other hand enlarges the vocabulary and is alleged to achieve a compression of semantic content.

The redundancy of a language is related to the existence of crossword puzzles. If the redundancy is zero any sequence of letters is a reasonable text in the language and any two-dimensional array of letters forms a crossword puzzle. If the redundancy is too high the language imposes too many constraints for large crossword puzzles to be possible. A more detailed analysis shows that if we assume the constraints imposed by the language are of a rather chaotic and random nature, large crossword puzzles are just possible when the redundancy is 50%. If the redundancy is 33%, three-dimensional crossword puzzles should be possible, etc.

8. REPRESENTATION OF THE ENCODING AND DECODING OPERATIONS

We have yet to represent mathematically the operations performed by the transmitter and receiver in encoding and decoding the information. Either of these will be called a discrete transducer. The input to the transducer is a sequence of input symbols and its output a sequence of output symbols. The transducer may have an internal memory so that its output depends not only on the present input symbol but also on the past history. We assume that the internal memory is finite, i.e., there exist a finite number $m$ of possible states of the transducer and that its output is a function of the present state and the present input symbol. The next state will be a second function of these two quantities. Thus a transducer can be described by two functions:

$$y_n = f(x_n, \alpha_n)$$

$$\alpha_{n+1} = g(x_n, \alpha_n)$$

where

$x_n$ is the $n$th input symbol,

$\alpha_n$ is the state of the transducer when the $n$th input symbol is introduced,

$y_n$ is the output symbol (or sequence of output symbols) produced when $x_n$ is introduced if the state is $\alpha_n$.

If the output symbols of one transducer can be identified with the input symbols of a second, they can be connected in tandem and the result is also a transducer. If there exists a second transducer which operates on the output of the first and recovers the original input, the first transducer will be called non-singular and the second will be called its inverse.
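A transducer described by an output function and a next-state function, together with an inverse, can be sketched as follows. The class name and the particular example (a binary transducer whose output records whether the input differs from the previous input) are illustrative assumptions, chosen only because the inverse is easy to write down.

```python
class Transducer:
    """Finite-state transducer given by an output function f and a next-state function g."""

    def __init__(self, f, g, initial_state):
        self.f = f                      # output symbol from (input, state)
        self.g = g                      # next state from (input, state)
        self.initial_state = initial_state

    def run(self, inputs):
        state = self.initial_state
        outputs = []
        for x in inputs:
            outputs.append(self.f(x, state))
            state = self.g(x, state)
        return outputs

# Hypothetical example: the state is the previous input symbol and the output
# is 1 when the present input differs from it.
encoder = Transducer(f=lambda x, a: x ^ a, g=lambda x, a: x, initial_state=0)

# Its inverse: the state is the previously recovered input symbol.
decoder = Transducer(f=lambda y, b: y ^ b, g=lambda y, b: y ^ b, initial_state=0)

message = [1, 1, 0, 1, 0, 0, 1]
encoded = encoder.run(message)
decoded = decoder.run(encoded)
print(encoded, decoded)
```

Running the decoder on the encoder's output amounts to connecting the two in tandem. Since the original input is recovered, the encoder in this sketch is non-singular.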

Theorem 7: The output of a finite state transducer driven by a finite state statistical source is a finite state statistical source, with entropy (per unit time) less than or equal to that of the input. If the transducer is non-singular they are equal.

Let $\alpha$ represent the state of the source, which produces a sequence of symbols $x_i$; and let $\beta$ be the state of the transducer, which produces, in its output, blocks of symbols $y_j$. The combined system can be represented by the “product state space” of pairs $(\alpha, \beta)$. Two points in the space, $(\alpha_1, \beta_1)$ and $(\alpha_2, \beta_2)$, are connected by a line if $\alpha_1$ can produce an $x$ which changes $\beta_1$ to $\beta_2$, and this line is given the probability of that $x$ in this case. The line is labeled with the block of $y_j$ symbols produced by the transducer. The entropy of the output can be calculated as the weighted sum over the states. If we sum first on $\beta$ each resulting term is less than or equal to the corresponding term for $\alpha$, hence the entropy is not increased. If the transducer is non-singular let its output be connected to the inverse transducer. If $H'_1$, $H'_2$ and $H'_3$ are the output entropies of the source, the first and second transducers respectively, then $H'_1 \ge H'_2 \ge H'_3 = H'_1$ and therefore $H'_1 = H'_2$.

Suppose we have a system of constraints on possible sequences of the type which can be represented by a linear graph as in Fig. 2. If probabilities $p_{ij}^{(s)}$ were assigned to the various lines connecting state $i$ to state $j$ this would become a source. There is one particular assignment which maximizes the resulting entropy (see Appendix 4).

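Theorem 8: Let the system of constraints considered as a channel have a capacity $C = \log W$. If we assign

$$p_{ij}^{(s)} = \frac{B_j}{B_i} W^{-\ell_{ij}^{(s)}}$$

where $\ell_{ij}^{(s)}$ is the duration of the $s$th symbol leading from state $i$ to state $j$ and the $B_i$ satisfy

$$B_i = \sum_{s,j} B_j W^{-\ell_{ij}^{(s)}}$$

then $H$ is maximized and equal to $C$.

9. THE FUNDAMENTAL THEOREM FOR A NOISELESS CHANNEL

Theorem 9: Let a source have entropy $H$ (bits per symbol) and a channel have a capacity $C$ (bits per second). Then it is possible to encode the output of the source in such a way as to transmit at the average rate $\frac{C}{H} - \epsilon$ symbols per second over the channel where $\epsilon$ is arbitrarily small. It is not possible to transmit at an average rate greater than $\frac{C}{H}$.

The converse part of the theorem, that $\frac{C}{H}$ cannot be exceeded, may be proved by noting that the entropy of the channel input per second is equal to that of the source, since the transmitter must be non-singular, and also this entropy cannot exceed the channel capacity. Hence $H' \le C$ and the number of symbols per second $= H'/H \le C/H$.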

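The first part of the theorem will be proved in two different ways. The first method is to consider the set of all sequences of $N$ symbols produced by the source. For $N$ large we can divide these into two groups, one containing less than $2^{(H+\eta)N}$ members and the second containing less than $2^{RN}$ members (where $R$ is the logarithm of the number of different symbols) and having a total probability less than $\delta$. As $N$ increases $\eta$ and $\delta$ approach zero. The number of signals of duration $T$ in the channel is greater than $2^{(C-\theta)T}$ with $\theta$ small when $T$ is large. If we choose

$$T = \left(\frac{H}{C} + \lambda\right) N$$

then there will be a sufficient number of sequences of channel symbols for the high probability group when $N$ and $T$ are sufficiently large (however small $\lambda$) and also some additional ones. The high probability group is coded in an arbitrary one-to-one way into this set. The remaining sequences are represented by longer sequences, starting and ending with one of the sequences not used for the high probability group. This special sequence acts as a start and stop signal for a different code. In between a sufficient time is allowed to give enough different sequences for all the low probability messages. This will require

$$T_1 = \left(\frac{R}{C} + \varphi\right) N$$

where $\varphi$ is small. The mean rate of transmission in message symbols per second will then be greater than

$$\left[(1-\delta)\frac{T}{N} + \delta\frac{T_1}{N}\right]^{-1} = \left[(1-\delta)\left(\frac{H}{C} + \lambda\right) + \delta\left(\frac{R}{C} + \varphi\right)\right]^{-1}.$$

As $N$ increases, $\delta$, $\lambda$ and $\varphi$ approach zero and the rate approaches $\frac{C}{H}$.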

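Another method of performing this coding and thereby proving the theorem can be described as follows: Arrange the messages of length $N$ in order of decreasing probability and suppose their probabilities are $p_1 \ge p_2 \ge p_3 \cdots \ge p_n$. Let $P_s = \sum_{i=1}^{s-1} p_i$; that is, $P_s$ is the cumulative probability up to, but not including, $p_s$. We first encode into a binary system. The binary code for message $s$ is obtained by expanding $P_s$ as a binary number. The expansion is carried out to $m_s$ places, where $m_s$ is the integer satisfying:

$$\log_2 \frac{1}{p_s} \le m_s < 1 + \log_2 \frac{1}{p_s}.$$

Thus the messages of high probability are represented by short codes and those of low probability by long codes. From these inequalities we have

$$\frac{1}{2^{m_s}} \le p_s < \frac{1}{2^{m_s - 1}}.$$

The code for $P_s$ will differ from all succeeding ones in one or more of its $m_s$ places, since all the remaining $P_i$ are at least $\frac{1}{2^{m_s}}$ larger and their binary expansions therefore differ in the first $m_s$ places. Consequently all the codes are different and it is possible to recover the message from its code. If the channel sequences are not already sequences of binary digits, they can be ascribed binary numbers in an arbitrary fashion and the binary code thus translated into signals suitable for the channel.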

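The average number $H'$ of binary digits used per symbol of original message is easily estimated. We have

$$H' = \frac{1}{N} \sum m_s p_s.$$

But,

$$\frac{1}{N} \sum \left(\log_2 \frac{1}{p_s}\right) p_s \le \frac{1}{N} \sum m_s p_s < \frac{1}{N} \sum \left(1 + \log_2 \frac{1}{p_s}\right) p_s$$

and therefore,

$$G_N \le H' < G_N + \frac{1}{N}.$$

As $N$ increases $G_N$ approaches $H$, the entropy of the source, and $H'$ approaches $H$.

We see from this that the inefficiency in coding, when only a finite delay of $N$ symbols is used, need not be greater than $\frac{1}{N}$ plus the difference between the true entropy $H$ and the entropy $G_N$ calculated for sequences of length $N$. The per cent excess time needed over the ideal is therefore less than

$$\frac{G_N}{H} + \frac{1}{HN} - 1.$$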
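The arithmetic coding process of this section, in which each message is assigned the leading binary places of its cumulative probability, can be sketched as follows. The function name is hypothetical, and exact rational arithmetic is an implementation choice made here so that the binary expansions are not disturbed by rounding.

```python
from fractions import Fraction

def cumulative_code(probs):
    """Binary codes from the cumulative-probability construction.

    probs: list of message probabilities given as Fractions.
    Returns a dict mapping each message index to its code string.
    """
    # Arrange the messages in order of decreasing probability.
    order = sorted(range(len(probs)), key=lambda s: probs[s], reverse=True)
    codes = {}
    cumulative = Fraction(0)        # P_s: total probability of the preceding messages
    for s in order:
        p = probs[s]
        # m_s is the smallest integer with 2**(-m_s) <= p_s.
        m = 0
        while Fraction(1, 2 ** m) > p:
            m += 1
        # Expand P_s as a binary fraction to m_s places.
        bits, frac = [], cumulative
        for _ in range(m):
            frac *= 2
            bit = int(frac >= 1)
            bits.append(str(bit))
            frac -= bit
        codes[s] = "".join(bits)
        cumulative += p
    return codes

dyadic = [Fraction(1, 2), Fraction(1, 4), Fraction(1, 8), Fraction(1, 8)]
general = [Fraction(4, 10), Fraction(3, 10), Fraction(2, 10), Fraction(1, 10)]
print(cumulative_code(dyadic))
print(cumulative_code(general))
```

When every probability is a power of one half the code lengths equal $\log_2 (1/p_s)$ exactly, so the average length equals the entropy. In the second case the lengths are rounded up, and the average exceeds the entropy by less than one digit per message.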

This method of encoding is substantially the same as one found independently by R. M. Fano.$^9$ His method is to arrange the messages of length $N$ in order of decreasing probability. Divide this series into two groups of as nearly equal probability as possible. If the message is in the first group its first binary digit will be 0, otherwise 1. The groups are similarly divided into subsets of nearly equal probability and the particular subset determines the second binary digit. This process is continued until each subset contains only one message. It is easily seen that apart from minor differences (generally in the last digit) this amounts to the same thing as the arithmetic process described above.
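Fano's splitting procedure can be sketched recursively. The function name and the rule for breaking ties between equally good split points (the earliest one is taken) are assumptions of this sketch, not details given in the text.

```python
def fano_code(probs):
    """Binary codes by repeated division into groups of nearly equal probability.

    probs: dict mapping each message to its probability.
    """
    # Arrange the messages in order of decreasing probability.
    items = sorted(probs.items(), key=lambda kv: kv[1], reverse=True)
    codes = {message: "" for message, _ in items}

    def split(group):
        if len(group) <= 1:
            return
        total = sum(p for _, p in group)
        # Find the cut that makes the two parts as nearly equal as possible.
        best_cut, best_diff, running = 1, float("inf"), 0.0
        for cut in range(1, len(group)):
            running += group[cut - 1][1]
            diff = abs(total - 2 * running)
            if diff < best_diff:
                best_cut, best_diff = cut, diff
        first, second = group[:best_cut], group[best_cut:]
        for message, _ in first:
            codes[message] += "0"
        for message, _ in second:
            codes[message] += "1"
        split(first)
        split(second)

    split(items)
    return codes

print(fano_code({"A": 0.5, "B": 0.25, "C": 0.125, "D": 0.125}))
```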

10. DISCUSSION AND EXAMPLES

In order to obtain the maximum power transfer from a generator to a load, a transformer must in general be introduced so that the generator as seen from the load has the load resistance. The situation here is roughly analogous. The transducer which does the encoding should match the source to the channel in a statistical sense. The source as seen from the channel through the transducer should have the same statistical structure as the source which maximizes the entropy in the channel. The content of Theorem 9 is that, although an exact match is not in general possible, we can approximate it as closely as desired. The ratio of the actual rate of transmission to the capacity $C$ may be called the efficiency of the coding system. This is of course equal to the ratio of the actual entropy of the channel symbols to the maximum possible entropy.

$^9$ Technical Report No. 65, The Research Laboratory of Electronics, M.I.T., March 17, 1949.

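In general, ideal or nearly ideal encoding requires a long delay in the transmitter and receiver. In the noiseless case which we have been considering, the main function of this delay is to allow reasonably good matching of probabilities to corresponding lengths of sequences. With a good code the logarithm of the reciprocal probability of a long message must be proportional to the duration of the corresponding signal, in fact

$$\left| \frac{\log p^{-1}}{T} - C \right|$$

must be small for all but a small fraction of the long messages.

As a simple example of some of these results consider a source which produces a sequence of letters chosen from among A, B, C, D with probabilities $\frac{1}{2}, \frac{1}{4}, \frac{1}{8}, \frac{1}{8}$, successive symbols being chosen independently. We have

$$H = -\left(\tfrac{1}{2} \log \tfrac{1}{2} + \tfrac{1}{4} \log \tfrac{1}{4} + \tfrac{2}{8} \log \tfrac{1}{8}\right) = \tfrac{7}{4} \text{ bits per symbol.}$$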

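Thus we can approximate a coding system to encode messages from this source into binary digits with an average of $\frac{7}{4}$ binary digit per symbol. In this case we can actually achieve the limiting value by the following code (obtained by the method of the second proof of Theorem 9):

A  0
B  10
C  110
D  111

The average number of binary digits used in encoding a sequence of $N$ symbols will be

$$N\left(\tfrac{1}{2} \times 1 + \tfrac{1}{4} \times 2 + \tfrac{2}{8} \times 3\right) = \tfrac{7}{4} N.$$

It is easily seen that the binary digits 0, 1 have probabilities $\frac{1}{2}, \frac{1}{2}$ so the $H$ for the coded sequences is one bit per symbol. Since, on the average, we have $\frac{7}{4}$ binary symbols per original letter, the entropies on a time basis are the same. The maximum possible entropy for the original set is $\log 4 = 2$, occurring when A, B, C, D have probabilities $\frac{1}{4}, \frac{1}{4}, \frac{1}{4}, \frac{1}{4}$. Hence the relative entropy is $\frac{7}{8}$. We can translate the binary sequences into the original set of symbols on a two-to-one basis by the following table:

00  A′
01  B′
10  C′
11  D′

This double process then encodes the original message into the same symbols but with an average compression ratio $\frac{7}{8}$.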
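The double process for the four-letter source can be sketched directly. The code table A to 0, B to 10, C to 110, D to 111 is the one produced by the cumulative-probability method for probabilities $\frac{1}{2}, \frac{1}{4}, \frac{1}{8}, \frac{1}{8}$; the pairing of binary digits with primed letters and the sample message are assumptions made for illustration (the message is chosen so that its letter frequencies match the probabilities exactly).

```python
from fractions import Fraction

CODE = {"A": "0", "B": "10", "C": "110", "D": "111"}
PROBS = {"A": Fraction(1, 2), "B": Fraction(1, 4),
         "C": Fraction(1, 8), "D": Fraction(1, 8)}
# One possible two-to-one translation of binary digits back into four symbols.
PAIR_TO_SYMBOL = {"00": "A'", "01": "B'", "10": "C'", "11": "D'"}

def encode(message):
    """Concatenate the binary codes of the letters."""
    return "".join(CODE[letter] for letter in message)

def decode(bits):
    """Recover the letters; no code is a prefix of another, so this is unambiguous."""
    inverse = {code: letter for letter, code in CODE.items()}
    letters, word = [], ""
    for bit in bits:
        word += bit
        if word in inverse:
            letters.append(inverse[word])
            word = ""
    return "".join(letters)

def to_pairs(bits):
    """Translate binary digits into primed symbols, two digits per symbol.

    Assumes an even number of digits.
    """
    return [PAIR_TO_SYMBOL[bits[i:i + 2]] for i in range(0, len(bits), 2)]

average_digits = sum(PROBS[letter] * len(CODE[letter]) for letter in CODE)
compression_ratio = average_digits / 2      # two binary digits per output symbol

message = "AABACABD"                        # 4 A's, 2 B's, 1 C, 1 D
bits = encode(message)
print(average_digits, compression_ratio)
print(bits, to_pairs(bits), decode(bits))
```

For this message the eight original letters become fourteen binary digits and then seven primed symbols, in agreement with the average ratio.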

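As a second example consider a source which produces a sequence of A’s and B’s with probability $p$ for A and $q$ for B. If $p \ll q$ we have

$$H = -\log p^p (1-p)^{1-p} = -p \log p (1-p)^{(1-p)/p} \doteq p \log \frac{e}{p}.$$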

In such a case one can construct a fairly good coding of the message on a 0, 1 channel by sending a special sequence, say 0000, for the infrequent symbol A and then a sequence indicating the number of B’s following it. This could be indicated by the binary representation with all numbers containing the special sequence deleted. All numbers up to 16 are represented as usual; 16 is represented by the next binary number after 16 which does not contain four zeros, namely 17 = 10001, etc.

It can be shown that as $p \to 0$ the coding approaches ideal provided the length of the special sequence is properly adjusted.

PART II: THE DISCRETE CHANNEL WITH NOISE

11. REPRESENTATION OF A NOISY DISCRETE CHANNEL

We now consider the case where the signal is perturbed by noise during transmission or at one or the other of the terminals. This means that the received signal is not necessarily the same as that sent out by the transmitter. Two cases may be distinguished. If a particular transmitted signal always produces the same received signal, i.e., the received signal is a definite function of the transmitted signal, then the effect may be called distortion. If this function has an inverse (no two transmitted signals producing the same received signal) distortion may be corrected, at least in principle, by merely performing the inverse functional operation on the received signal.

The case of interest here is that in which the signal does not always undergo the same change in transmission. In this case we may assume the received signal $E$ to be a function of the transmitted signal $S$ and a second variable, the noise $N$.

$$E = f(S, N)$$

The noise is considered to be a chance variable just as the message was above. In general it may be represented by a suitable stochastic process. The most general type of noisy discrete channel we shall consider is a generalization of the finite state noise-free channel described previously. We assume a finite number of states and a set of probabilities

$$p_{\alpha,i}(\beta, j).$$

This is the probability, if the channel is in state $\alpha$ and symbol $i$ is transmitted, that symbol $j$ will be received and the channel left in state $\beta$. Thus $\alpha$ and $\beta$ range over the possible states, $i$ over the possible transmitted signals and $j$ over the possible received signals. In the case where successive symbols are independently perturbed by the noise there is only one state, and the channel is described by the set of transition probabilities $p_i(j)$, the probability of transmitted symbol $i$ being received as $j$.

If a noisy channel is fed by a source there are two statistical processes at work: the source and the noise. Thus there are a number of entropies that can be calculated. First there is the entropy $H(x)$ of the source or of the input to the channel (these will be equal if the transmitter is non-singular). The entropy of the output of the channel, i.e., the received signal, will be denoted by $H(y)$. In the noiseless case $H(y) = H(x)$. The joint entropy of input and output will be $H(x, y)$. Finally there are two conditional entropies $H_x(y)$ and $H_y(x)$, the entropy of the output when the input is known and conversely. Among these quantities we have the relations

$$H(x, y) = H(x) + H_x(y) = H(y) + H_y(x).$$

All of these entropies can be measured on a per-second or a per-symbol basis.
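The relations among these entropies can be verified numerically for a channel with a single state. In the sketch below the function name, the dictionary keys, and the sample channel (a binary channel with 1% errors fed by equally likely symbols) are illustrative assumptions. The conditional entropies are computed from their definitions rather than from the relations being checked.

```python
import math

def entropy(probs):
    """Entropy in bits of a list of probabilities."""
    return -sum(p * math.log2(p) for p in probs if p > 0)

def channel_entropies(input_probs, transition):
    """Entropies for a one-state channel; transition[i][j] is p_i(j)."""
    n_in, n_out = len(input_probs), len(transition[0])
    joint = [[input_probs[i] * transition[i][j] for j in range(n_out)]
             for i in range(n_in)]
    output_probs = [sum(joint[i][j] for i in range(n_in)) for j in range(n_out)]
    pairs = [(i, j) for i in range(n_in) for j in range(n_out) if joint[i][j] > 0]
    return {
        "Hx": entropy(input_probs),
        "Hy": entropy(output_probs),
        "Hxy": entropy([p for row in joint for p in row]),
        # H_x(y): entropy of the output when the input is known.
        "Hx_y": -sum(joint[i][j] * math.log2(transition[i][j]) for i, j in pairs),
        # H_y(x): entropy of the input when the output is known.
        "Hy_x": -sum(joint[i][j] * math.log2(joint[i][j] / output_probs[j])
                     for i, j in pairs),
    }

e = channel_entropies([0.5, 0.5], [[0.99, 0.01], [0.01, 0.99]])
print(e["Hxy"], e["Hx"] + e["Hx_y"], e["Hy"] + e["Hy_x"])
```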

12. EQUIVOCATION AND CHANNEL CAPACITY

If the channel is noisy it is not in general possible to reconstruct the original message or the transmitted signal with certainty by any operation on the received signal $E$. There are, however, ways of transmitting the information which are optimal in combating noise. This is the problem which we now consider.

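Suppose there are two possible symbols 0 and 1, and we are transmitting at a rate of 1000 symbols per second with probabilities $p_0 = p_1 = \frac{1}{2}$. Thus our source is producing information at the rate of 1000 bits per second. During transmission the noise introduces errors so that, on the average, 1 in 100 is received incorrectly (a 0 as 1, or 1 as 0). What is the rate of transmission of information? Certainly less than 1000 bits per second since about 1% of the received symbols are incorrect. Our first impulse might be to say the rate is 990 bits per second, merely subtracting the expected number of errors. This is not satisfactory since it fails to take into account the recipient’s lack of knowledge of where the errors occur. We may carry it to an extreme case and suppose the noise so great that the received symbols are entirely independent of the transmitted symbols. The probability of receiving 1 is $\frac{1}{2}$ whatever was transmitted and similarly for 0. Then about half of the received symbols are correct due to chance alone, and we would be giving the system credit for transmitting 500 bits per second while actually no information is being transmitted at all. Equally “good” transmission would be obtained by dispensing with the channel entirely and flipping a coin at the receiving point.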

Evidently the proper correction to apply to the amount of information transmitted is the amount of this information which is missing in the received signal, or alternatively the uncertainty when we have received a signal of what was actually sent. From our previous discussion of entropy as a measure of uncertainty it seems reasonable to use the conditional entropy of the message, knowing the received signal, as a measure of this missing information. This is indeed the proper definition, as we shall see later. Following this idea the rate of actual transmission, $R$, would be obtained by subtracting from the rate of production (i.e., the entropy of the source) the average rate of conditional entropy.

$$R = H(x) - H_y(x)$$

The conditional entropy $H_y(x)$ will, for convenience, be called the equivocation. It measures the average ambiguity of the received signal.

In the example considered above, if a 0 is received the a posteriori probability that a 0 was transmitted is .99, and that a 1 was transmitted is .01. These figures are reversed if a 1 is received. Hence

$$H_y(x) = -[.99 \log .99 + 0.01 \log 0.01] = .081 \text{ bits/symbol}$$

or 81 bits per second. We may say that the system is transmitting at a rate $1000 - 81 = 919$ bits per second. In the extreme case where a 0 is equally likely to be received as a 0 or 1 and similarly for 1, the a posteriori probabilities are $\frac{1}{2}, \frac{1}{2}$ and

$$H_y(x) = -\left[\tfrac{1}{2} \log \tfrac{1}{2} + \tfrac{1}{2} \log \tfrac{1}{2}\right] = 1 \text{ bit per symbol}$$

or 1000 bits per second. The rate of transmission is then 0 as it should be.
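The arithmetic of this example can be reproduced with a short calculation. The sketch assumes, as in the example, equally likely binary symbols and errors that affect 0 and 1 alike, so that the a posteriori probabilities are simply the error probability and its complement; the function names are hypothetical.

```python
import math

def equivocation(error_prob):
    """H_y(x) in bits per symbol for equally likely binary symbols with symmetric errors."""
    posterior = [1 - error_prob, error_prob]
    return -sum(p * math.log2(p) for p in posterior if p > 0)

def transmission_rate(symbols_per_second, error_prob):
    """R = H(x) - H_y(x) in bits per second; H(x) is 1 bit per symbol here."""
    source_rate = symbols_per_second * 1.0
    return source_rate - symbols_per_second * equivocation(error_prob)

print(equivocation(0.01), transmission_rate(1000, 0.01))
print(equivocation(0.5), transmission_rate(1000, 0.5))
```

The first line gives about .081 bits per symbol and a rate near 919 bits per second; the second gives an equivocation of 1 bit per symbol and a rate of zero.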

The following theorem gives a direct intuitive interpretation of the equivocation and also serves to justify it as the unique appropriate measure. We consider a communication system and an observer (or auxiliary device) who can see both what is sent and what is recovered (with errors due to noise). This observer notes the errors in the recovered message and transmits data to the receiving point over a “correction channel” to enable the receiver to correct the errors. The situation is indicated schematically in Fig. 8.

Theorem 10: If the correction channel has a capacity equal to $H_y(x)$ it is possible to so encode the correction data as to send it over this channel and correct all but an arbitrarily small fraction of the errors. This is not possible if the channel capacity is less than $H_y(x)$.